News

The effect of images on the ability of Large Language Models to predict human judgements of how acceptable sentences are.

Centre for Fundamentals of AI and Computational Theory19 March 2026

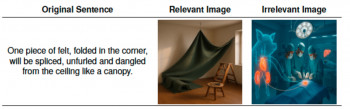

A new paper, "Predicting Sentence Acceptability Judgments in Multimodal Contexts", by a team including Shalom Lappin from the Centre for Fundamental AI and Computational Theory, explores the effect of images on the ability of Large Language Models (LLMs) to predict the ratings humans give to sentences over how acceptable they are. For example, how acceptable are each of these sentences (given an image to give context)?

- "But the answer seems to be no: Reeves lets it be known she requests no costings on raising the three forbidden taxes." (original sentence)

- "But the reply does not seem: reeves suggests that it would not cost to raise the three banned taxes." (modified sentence)

The team found that unlike when a written context is given, images that provide context had little if any impact on the ratings given by humans of how acceptable the sentences were.

Different kinds of LLMs were able to predict human acceptability judgments very accurately. However, in general, their performance was slightly better when images are removed! Moreover, the distribution of LLM judgments varies among models. The LLM Qwen resembled human patterns, but others diverged from them.

This experimental work suggests that a larger gap exists between the internal representations of LLMs and their generated predictions when images are present to give visual contexts. It suggests interesting points of similarity and of difference between the way humans and LLMs process sentences in multimodal contexts.

Read the paper "Predicting Sentence Acceptability Judgments in Multimodal Contexts" on arXiv

People: Shalom LAPPIN

Contact: Shalom LappinEmail: s.lappin@qmul.ac.uk

Updated by: Paul Curzon